NVIDIA researchers teach dexterity to robot hand via simulation

By DE Staff

Isaac Gym robotics simulator trains end-effector dexterity 10,000 times faster than in real world, company says.

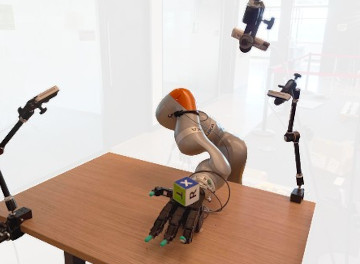

NVIDIA’s DeXtreme project paired an affordable robotic hand, three off-the-shelf RGB cameras and a 3D-printed cube with its Isaac Gym simulation environment to train robot-hand dexterity 10,000 times faster than in the real world.

(Photo credit: NVIDIA)

One approach to improving robot dexterity is deep reinforcement learning (RL), which trains a neural network to control a robot’s joints through trial and error. Unfortunately, the technique can require millions or even billions of samples to learn from.

To get around this limitation and save wear and tear on a physical robot, NVIDIA researchers on the company’s DeXtreme project turned to NVIDIA Isaac Gym, an RL robotics simulator that obeys the laws of physics but, the company says, can train a robot 10,000 times faster than in the real world.

Using Isaac Gym, NVIDIA researchers taught a robot hand to manipulate a cube until it matched a given target position and orientation, training entirely in simulation before transferring the learned control policy to a real-world robot.

Unlike previous attempts that required an expensive robot hand, an instrumented cube and a supercomputer, NVIDIA’s DeXtreme project cut hardware requirements down to off-the-shelf components. The project used a four-fingered Allegro Hand, paired with standard RGB cameras and a 3D-printed cube with stickers affixed to each face.

To train the control policy, the researchers used Isaac Gym, NVIDIA’s GPU-accelerated simulation environment for reinforcement learning. NVIDIA PhysX simulates the world on the GPU, and the simulation results stay in GPU memory while the deep learning control policy network is trained, so data does not have to be copied back and forth to the CPU. As a result, training can run on a single NVIDIA Omniverse OVX server. According to the company, training on this system takes about 32 hours, equivalent to 42 years of a single robot’s experience in the real world.
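As a rough sanity check on the company’s figures, the ratio of real-world experience to simulated training time can be worked out directly. The sketch below is illustrative only: the function name `speedup` is hypothetical, and it assumes an average year of 365.25 days. With 42 years (about 368,172 hours) covered in 32 hours of training, the ratio comes to roughly 11,500, the same order of magnitude as the 10,000x figure NVIDIA quotes.

```python
# Rough check of the article's figures: 32 hours of simulated training
# versus 42 years of equivalent real-world robot experience.
HOURS_PER_YEAR = 365.25 * 24  # assumption: average year length, including leap days


def speedup(sim_hours: float, real_years: float) -> float:
    """Hypothetical helper: real-world hours of experience per hour of training."""
    return real_years * HOURS_PER_YEAR / sim_hours


if __name__ == "__main__":
    factor = speedup(sim_hours=32, real_years=42)
    print(f"{factor:,.0f}x")  # prints "11,505x"
```

The exact factor shifts slightly with the year length assumed, which is one reason to treat "10,000 times faster" as an order-of-magnitude claim rather than a precise measurement.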

According to the DeXtreme project research team, breakthroughs in robotic manipulation will enable a new wave of robotics applications beyond traditional industrial uses. By demonstrating that simulation combined with low-cost hardware can be an effective way to train complex robotic systems, they hope to inspire others to build on their work.

https://dextreme.org