Engineers develop algorithm to program tiny robots to move, think like insects

Staff

A Cornell University team explores a new type of programming using neuromorphic computer chips to mimic the way an insect's brain works.

You can create a tiny, insect-like robot, but can you program the device to act like one?

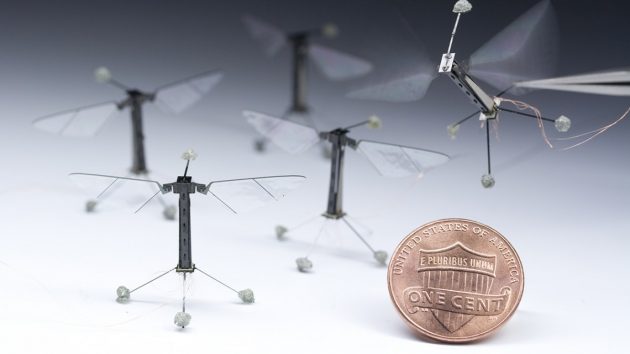

RoboBees manufactured by the Harvard Microrobotics Lab will soon fly and mimic insect “thinking” thanks to Cornell engineers developing new programming that will make them more autonomous and adaptable to complex environments. (Photo courtesy of Cornell University).

That is one of the questions engineers at Cornell University are addressing in their latest experiment, which looks at a new type of programming that mimics the way an insect’s brain works.

With conventional computing hardware, getting a robot to act and “think” like the insect it was designed to mimic would require the robot to carry a desktop-size computer on its back.

The Cornell team believes they have a better way to get these insect-like robots to move and think through the use of neuromorphic computer chips, which will enable engineers to shrink a robot’s payload, explains Silvia Ferrari, professor of mechanical and aerospace engineering and director of the Laboratory for Intelligent Systems and Controls.

Unlike traditional chips that process combinations of 0s and 1s as binary code, neuromorphic chips process spikes of electrical current that fire in complex combinations, similar to how neurons fire inside a brain.
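To make the spike-based idea concrete, here is a minimal Python sketch of a leaky integrate-and-fire neuron, a common textbook model of a spiking neuron. The article does not say which neuron model the chips or Ferrari’s algorithms use, so the model choice and the parameter names (`tau`, `threshold`, `v_reset`) are illustrative assumptions. Rather than storing a binary value, the simulated neuron accumulates its input and communicates only through the timing of its spikes.

```python
def lif_spike_times(inputs, tau=20.0, dt=1.0, threshold=1.0, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron (illustrative model only).

    inputs: input current at each time step (arbitrary units).
    Returns the indices of the time steps at which the neuron spiked.
    """
    v = v_reset
    spikes = []
    for t, current in enumerate(inputs):
        # The membrane potential leaks toward zero while integrating the input.
        v += dt * (-v / tau + current)
        if v >= threshold:
            spikes.append(t)  # emit a spike: an event in time, not a stored number
            v = v_reset       # reset the potential after firing
    return spikes
```

With a constant input, a stronger current drives the neuron to its threshold sooner, so it spikes more often; the information is carried by spike rate and timing rather than by a string of 0s and 1s.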

Ferrari’s lab is developing a new class of “event-based” sensing and control algorithms that mimic neural activity and can be implemented on neuromorphic chips.
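The article does not detail how Ferrari’s event-based algorithms work. As a general illustration of what “event-based” sensing means, the following hypothetical Python sketch uses send-on-delta encoding, the same principle behind event cameras: a sensor reports an event only when its reading changes by a set amount, so a steady signal produces no data to process. The function name `to_events` and the `delta` threshold are assumptions chosen for illustration.

```python
def to_events(samples, delta=0.1):
    """Convert a sampled signal into ON/OFF events (send-on-delta encoding).

    An event (index, +1) or (index, -1) is emitted only when the signal has
    moved by at least `delta` since the last event; quiet stretches are silent.
    """
    events = []
    if not samples:
        return events
    reference = samples[0]  # level at which the last event was reported
    for i, x in enumerate(samples[1:], start=1):
        # Emit one event per threshold crossing, even if the signal jumped
        # by several multiples of delta in a single step.
        while x - reference >= delta:
            reference += delta
            events.append((i, +1))
        while reference - x >= delta:
            reference -= delta
            events.append((i, -1))
    return events
```

For example, `to_events([0.0, 0.0, 1.0, 1.0, 0.5], delta=0.5)` yields two ON events at index 2 and one OFF event at index 4, and nothing for the unchanging samples in between.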

The team has partnered with the Harvard Microrobotics Laboratory, which has developed an 80-milligram flying RoboBee outfitted with a number of vision, optical flow and motion sensors.

The Cornell algorithms will help make RoboBee more autonomous and adaptable to complex environments without significantly increasing its weight.

“We’re developing sensors and algorithms to allow RoboBee to avoid the crash, or if crashing, survive and still fly,” said Ferrari. “You can’t really rely on prior modeling of the robot to do this, so we want to develop learning controllers that can adapt to any situation.”

The team is speeding this learning process through a virtual simulator created by Taylor Clawson, a doctoral student in Ferrari’s lab. The physics-based simulator models the RoboBee and the instantaneous aerodynamic forces it faces during each wing stroke. This enables the model to accurately predict RoboBee’s motions during flights through complex environments.

“The simulation is used both in testing the algorithms and in designing them,” said Clawson, who has successfully developed an autonomous flight controller for the robot using biologically inspired programming that functions as a neural network.

Aside from greater autonomy and resiliency, Ferrari said her lab plans to help outfit RoboBee with new micro devices such as a camera, expanded antennae for tactile feedback, contact sensors on the robot’s feet and airflow sensors that look like tiny hairs.

“We’re using RoboBee as a benchmark robot because it’s so challenging, but we think other robots that are already untethered would greatly benefit from this development because they have the same issues in terms of power,” said Ferrari.

The Harvard Ambulatory Microrobot, a four-legged machine just 17 millimeters long and weighing less than 3 grams, is already benefitting from Ferrari’s research.

It can scamper at a speed of 0.44 meters per second, but Ferrari’s lab is developing event-based algorithms that will help complement the robot’s speed with agility.