The Future of AI Ain’t What It Used To Be

By Ralph Grabowski

Despite its present hype, artificial intelligence integration with CAD software is more miss than hit.

Artificial intelligence is 65 years old. It began as a 1950s computer programming exercise, resulting in the LISP programming language, among others. Since then, AI has endured decades-long cycles of summers and winters.

Back in 1970, for instance, AI pioneer Marvin Minsky proclaimed, “From three to eight years, we will have a machine with the general intelligence of an average human being.”

We didn’t.

With AI’s 2023 resurgence through ChatGPT, the tech industry hopes AI will give it the sales boost denied by earlier debacles like gorilla-themed NFTs, crypto exchange failures and the lackluster adoption of augmented reality goggles.

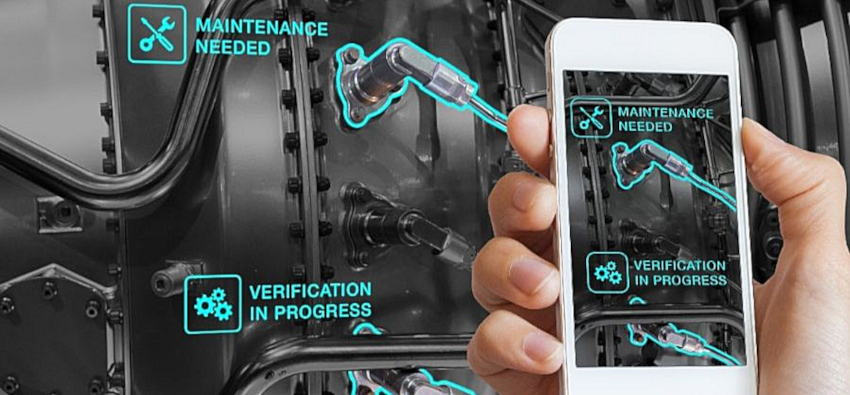

PTC employs AI in its augmented reality software to pinpoint possible trouble spots.

(Photo credit: PTC)

The CAD business is mature, and so it is always looking for the fresh hit “thing” to escalate revenues. The thing that was supposed to carry it into the future (i.e., cloud-based CAD), and on which many executives pinned their hopes and millions of R&D dollars, ended up being largely uninteresting to users. So, CAD vendors are tip-toeing into AI.

Where AI Already Is in CAD

AI is found in limited areas of CAD, and even then it sometimes might not be AI at all. For instance, Bricsys, in 2016, was the first to employ the term “AI”, applying it to BIM entity detection, and a command that found and replaced structural junctions with connectors. In my opinion, this isn’t AI; it’s find-and-replace applied to groups of entities.

Today, the company says it uses AI for SmartCell copying in tables; for copying 3D solids and faces; in converting AutoCAD’s dynamic blocks to BricsCAD’s parametric blocks; and for drawing health management.

Siemens Software was next in 2019, with an AI in NX’s interface, predicting command(s) we were likely to use next. In my opinion, this isn’t AI either; it’s the MRU (most-recently-used) function, as found in other software. More recently, the company says it added AI to NX Sketch for inferring relationships between entities, as well as to Solid Edge for suggesting assembly relationships.
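To show how little machinery a most-recently-used list needs, here is a minimal Python sketch. It is an illustration only, not Siemens' implementation; the class name `MRUCommands`, its methods, and the command names are all hypothetical.

```python
from collections import OrderedDict


class MRUCommands:
    """Hypothetical most-recently-used command list (illustration only)."""

    def __init__(self, capacity=5):
        self.capacity = capacity
        self._items = OrderedDict()  # oldest command first, newest last

    def record(self, command):
        # Re-inserting a command moves it to the newest position.
        self._items.pop(command, None)
        self._items[command] = True
        # Drop the oldest entries once the list is over capacity.
        while len(self._items) > self.capacity:
            self._items.popitem(last=False)

    def suggest(self, n=3):
        # Return up to n commands, newest first.
        return list(reversed(self._items))[:n]


mru = MRUCommands(capacity=3)
for cmd in ["extrude", "fillet", "sketch", "extrude", "chamfer"]:
    mru.record(cmd)

print(mru.suggest())  # ['chamfer', 'extrude', 'sketch']
```

Nothing here is learned from data; the "prediction" is simply the order in which commands were last used.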

PTC reassures customers upfront, saying it employs artificial narrow-intelligence: “It is not a matter of machines thinking like people, but rather sophisticated algorithms designed for a pre-defined task with a well-understood set of inputs,” writes PTC market analyst, Colin McMahon. So far, the company has applied AI to IoT (Internet of Things) software in predicting downtimes in machines and customer service chatbots.

Dassault Systemes says it employs AI in data analytics and suggests that, in the future, it might use AI to enhance digital twin simulations and to generate 3D interactive environments. Solidworks says its Design Assistant uses AI.

A New Generation of AI

With firms like OpenAI making access to AI easy, several design software firms launched last year. CadAiCo, for example, says its AI-based CAD system combines machine learning with blockchains and enables payments between users and third parties through $CAD tokens. It is apparently trained on millions of CAD models, but is not yet shipping; $CAD doesn’t seem to be listed yet.

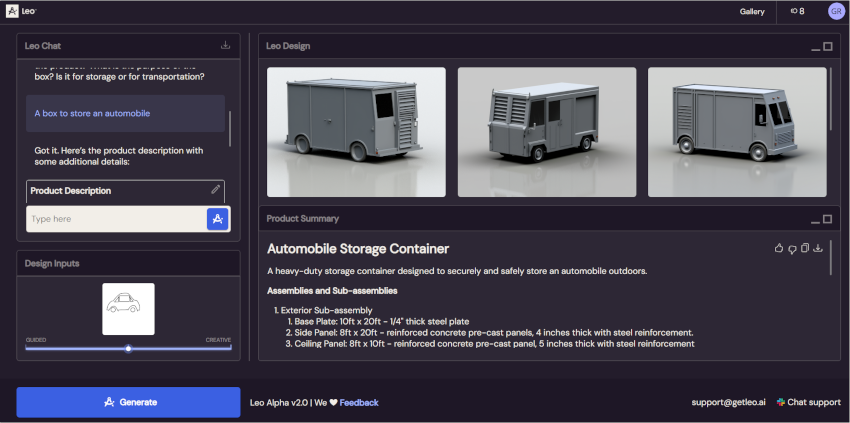

Leo.ai says its design copilot creates 3D models from sketches, specs and CAD constraints.

(Image credit: Leo AI, Ltd.)

Another newcomer is Leo, which says it uses generative AI to design parts and full assemblies “with 3D CAD models you can edit anywhere.” I asked it to design a box to store an automobile, and it returned images of standard shipping containers. When I added a sketch in the shape of a car, it updated the images to look like container-styled vehicles. I couldn’t obtain an editable 3D model, perhaps because Leo is still in alpha testing.

In both cases, I was unable to determine the source of the AI or the training data sets.

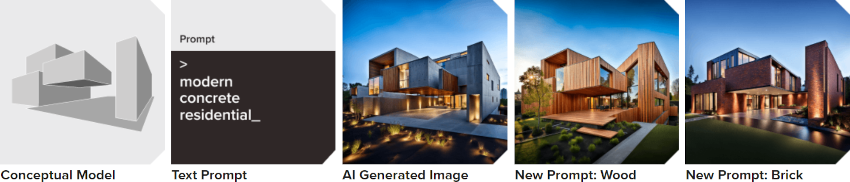

Among existing CAD vendors, Nemetschek Group’s Graphisoft released an Archicad 27 add-on that uses AI from Stable Diffusion to generate 3D visualizations during early design stages. However, Stable Diffusion is facing funding problems and is being sued by Getty Images over the alleged misuse of twelve million copyrighted images.

Stable Diffusion’s AI-based Visualizer renders images based on a 3D conceptual model in ArchiCAD.

(Image credit: Graphisoft)

Graphisoft told me that the ethical use of technology is its highest priority, and that AI Visualizer is still in the exploration phase. ArchiCAD’s Adaptive Hybrid Framework lets the company extend AI Visualizer’s options, letting customers use other AI providers, should it be necessary.

During last fall’s Autodesk University 2023, Autodesk’s CTO revealed the company has stored 40 petabytes of customers’ data.

“There is no AI without actionable data,” she said, so the company plans to merge it all into a single database called Autodesk Data Model. An Autodesk vice president then explained that the 15 billion customer files, hosted by the company, contain data like “existing conditions, past and current projects, GIS, IoT sensors, workplace management systems, stakeholder sentiments, geotechnical, pollution, etc.”

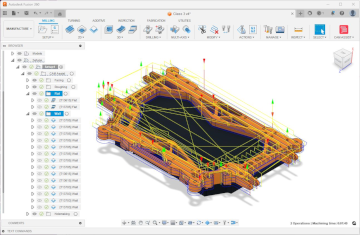

For MCAD users, AI will be added to Fusion 360, first with one-click fully-dimensioned drawings of 3D models, and automated toolpaths later. Autodesk is, however, held back by its end-user license preventing it from using AI on our data. I assume it might rewrite the EULA, presenting it to customers as a fait accompli.

Where AI Stumbles Today

As the hype over AI wears down, drawbacks are emerging.

AI has different implementations but they have similar names, which can confuse laypeople. ChatGPT (generative pre-trained transformer), for instance, is an LLM that runs a GAI, while aiming for AGI. That’s a large language model running generative-artificial intelligence (answers returned from large data sets), with the goal of artificial general-intelligence (equivalent to the work of a human of median intelligence). Amazon uses retrieval-augmented generation.

AI in Autodesk’s Fusion 360 automatically generating machine tool paths.

(Image credit: Autodesk)

AI needs huge amounts of data. AI firms scrape databases from which to generate answers. Some databases were created for AI research, such as Books3, which provides ASCII text of 187,000 books, and github.tar, with 100GB of programming code. The data must be tagged by humans, who don’t get paid much.

AI data scraping triggers lawsuits. Not all data is clean data. Through class action lawsuits, authors are suing AI firms over copyright violations. In another lawsuit, OpenAI is accused of using personal data that must be kept private under government law, such as scraping MyChart for medical data and private conversations from Slack and Teams.

Another lawsuit accuses OpenAI of reproducing programming code without credit. “Legal experts have cautioned generative AI tools could put companies at risk, if they were to unwittingly incorporate copyrighted content generated by the tools into any of the products they sell,” reports TechCrunch.

AI compresses data, which leads to inaccuracies, kind of like artifacts in JPEG images. For instance, asked to give references, ChatGPT can list articles and books that do not exist or don’t discuss the topic. These malformed answers pollute older data sources with misinformation. For instance, ChatGPT told a CAD journalist this factoid about me: “In 2013, he was appointed as Director of the Open Design Alliance’s (ODA) Moscow office.” I was never an employee of the ODA, and never worked in Moscow.

AI is expensive to run. nVidia sells the hottest AI processor, the H100 GPU, which costs $30,000 to $40,000 each. Every AI firm needs thousands. Storing mammoth data sets on the cloud is also expensive. For OpenAI, the cost is an estimated $20 million a month.
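A rough back-of-the-envelope calculation puts the hardware bill in perspective. The per-unit price range comes from the figures above; the GPU counts are assumptions chosen only to illustrate "thousands" and do not describe any particular firm.

```python
# Per-unit H100 price range quoted in the article, in US dollars.
PRICE_LOW, PRICE_HIGH = 30_000, 40_000


def hardware_cost(gpu_count):
    """Return the (low, high) purchase cost in dollars for gpu_count GPUs."""
    return gpu_count * PRICE_LOW, gpu_count * PRICE_HIGH


# GPU counts below are illustrative assumptions, not reported figures.
for count in (1_000, 5_000, 10_000):
    low, high = hardware_cost(count)
    print(f"{count:>6,} GPUs: ${low / 1e6:,.0f} million to ${high / 1e6:,.0f} million")
```

Even at the low end of these assumed counts, the purchase price alone runs to tens of millions of dollars, before electricity, cooling and cloud storage.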

AI is bad at math. CAD is all about math, but AI does not work well with it. When I asked ChatGPT for the last ten digits of pi, it replied with three wrong answers. (The correct answer: “As pi is irrational, I don’t know.”)
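The point about pi can be checked with ordinary arithmetic. The sketch below is a minimal illustration that uses Machin's formula and Python's built-in big integers; the function names are my own. It shows that the "last ten digits" change every time the expansion is carried further, so no final answer exists.

```python
def arctan_inv(x, unity):
    """Return arctan(1/x) scaled by unity, summing the Taylor series in integers."""
    total = term = unity // x
    x2 = x * x
    n, sign = 3, -1
    while term:
        term //= x2
        total += sign * (term // n)
        n += 2
        sign = -sign
    return total


def pi_digits(places):
    """Return pi truncated to `places` decimal places, as a string of digits."""
    guard = 10  # extra working digits to absorb truncation error
    unity = 10 ** (places + guard)
    # Machin's formula: pi = 4 * (4*arctan(1/5) - arctan(1/239))
    pi_scaled = 4 * (4 * arctan_inv(5, unity) - arctan_inv(239, unity))
    return str(pi_scaled // 10 ** guard)


for places in (20, 30, 40):
    print(places, pi_digits(places)[-10:])
# 20 8979323846
# 30 2643383279
# 40 5028841971
```

Each stopping point yields a different set of "last" digits, which is why any confident reply to the question is wrong by construction.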

AI generates poor C++ code. CAD software is mostly programmed with C++, but when contract programming group LEDAS tested ChatGPT, it found the C++ code was output badly, if at all. ChatGPT also failed when working with OpenCascade’s geometric kernel, making calls to non-existent methods and misusing existing ones. Python code was, however, acceptable.

Meanwhile, competitors are looking for ways to work with less data, reduce the compute cost and come up with better answers. Even so, a civil war within the AI community asks whether software should be open- or closed-source; should output from AI have no moral restrictions or have answers censored? Within AI, this is known as “alignment,” but no one knows who decides on the answers.

Half-a-decade ago, it was sufficient for CAD vendors to assert their software uses AI. However, as the limitations of AI become better understood, customers ought to know what’s operating under the hood. CAD users face an accuracy and legality conundrum: Does AI serve up fiction or non-fiction; is the response even legal? Even when responses look factual, we humans still need to confirm the output – like the last ten digits of pi.

For conceptual design and for design suggestions, AI is useful; for designs where safety and accuracy matter, it is not.

Ralph Grabowski writes on the CAD industry on his WorldCAD Access blog (www.worldcadaccess.com) and has authored numerous articles and books on CAD and other design software.