Sourcing the Best Vision System Camera

By Glen Ahearn

Follow this how-to guide to find the best vision system camera for the price.

So you’re designing a vision system. You know your application and your performance expectations, and you have a general idea of how they might translate into camera specifications and cost. But there are a lot of variables and many ways to meet your vision requirement.

The easiest solution would be complete transparency – show a camera manufacturer your application and ask them what you need. Unfortunately, you can’t always do that due to confidentiality requirements. If this is the case, here’s what you need to tell a camera provider so they can help you get the best possible camera for your application.

Camera Type

In machine vision there are two primary types of cameras: Line scan and area cameras. While other types of cameras and sensors exist, most machine vision applications are addressed by one of these two broad categories.

As a good rule of thumb, ask the following question: “Will the camera be looking at a discrete component or a continuous ‘web’?” If it is a discrete component, an area camera may be a good choice. If it is a continuous web process, such as paper, aluminum, steel, glass, or fabric, a line scan camera may be the right option.

One exception to this rule, however, is if the discrete component you are looking at has very high-resolution requirements in one or both dimensions. Line scan cameras offer higher resolution than area cameras in one dimension. And by scanning the object at a greater number of lines over the length of the object, you can achieve even higher resolution in the second dimension.

Resolution

To choose the right camera for the job, it’s critical to know the resolution requirement (i.e. the number of pixels covering the field of view). Calculating the resolution requires knowing the size of the field of view and knowing the smallest object you wish to resolve.

Since we won’t be covering optics in this discussion, we will assume that the optical magnification is 1 (i.e. no magnification). This means the field of view can be directly related to the number of pixels on the camera. For example, if the field of view you want to image is 4˝ x 2˝ and you need 1,000 pixels per inch, you know you need a camera which has a minimum resolution of 4,000 x 2,000 pixels.

How do you decide how many pixels you need? Let’s look at a basic machine vision operation: defect detection. Suppose you need to find defects as small as 1/100˝ x 1/100˝. Most machine vision experts will tell you it is prudent to have more than one pixel cover a defect for reliable detection.

A good rule of thumb is to have 3 pixels across the smallest feature you want to detect. In our example, that means one pixel for every 1/300˝ (roughly 3/1,000˝), or 300 pixels per inch. If we are imaging a 4˝ x 2˝ field of view, we need at least 1,200 pixels (4˝ x 300 pixels per inch) x 600 pixels (2˝ x 300 pixels per inch).
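The rule of thumb above can be written as a short calculation. This is a minimal sketch that assumes a magnification of 1, as in the text; the function name `pixels_required` and its parameters are illustrative and do not come from any camera vendor's tools.

```python
import math


def pixels_required(fov_inches, smallest_feature_inches, pixels_per_feature=3):
    """Return the minimum pixel count along one axis of the field of view."""
    # How many smallest-feature widths fit across the field of view.
    features_across = fov_inches / smallest_feature_inches
    # Round first so floating-point noise cannot push an exact result up by one.
    return math.ceil(round(features_across * pixels_per_feature, 6))


# 4 x 2 inch field of view, 1/100 inch defects, 3 pixels per defect.
width_px = pixels_required(4.0, 0.01)
height_px = pixels_required(2.0, 0.01)
print(width_px, height_px)  # 1200 600
```

Changing `pixels_per_feature` shows how quickly the required sensor size grows when you ask for more pixels per defect.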

Acquisition Rate

How fast does your camera need to go? For area cameras and discrete component inspection, this is a very straightforward question: What is the rate at which your “widgets” pass a single point in space? If the answer is 25 widgets per second, then you need an area camera that can run at 25 frames per second (fps) or faster. It is always a good idea to leave some margin on all of these specifications, so a camera rated for at least 30 fps is a good choice.

There is a second dimension to this question that involves exposure time. Not only does the camera’s frame rate need to keep up with the widget rate, but the host computer also needs time to perform image processing and make whatever decisions it needs to make (e.g. reject a part). This is part of the overall “cycle time.” If the camera is being triggered at a rate of 25 fps, that works out to 40 milliseconds (ms) per frame, so 40ms is your cycle time. All the image acquisition, transfer and processing must take place within that 40ms window.

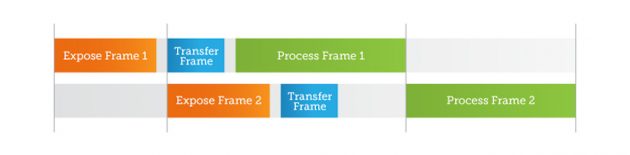

If we know it takes the host computer 20ms to process each image, and we know it takes 5ms to transfer the image from the camera to the host computer, then we know there is only 15ms left for exposure time if the steps run back to back. Some of these processes, like exposure, are serial processes, while others can be overlapped. (See the Fig. 1 timeline for details.)
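The time budget in this example can be checked with a few lines of arithmetic. This sketch assumes exposure, transfer and processing run strictly one after another within a single cycle; the function name `exposure_budget_ms` is illustrative.

```python
def exposure_budget_ms(trigger_rate_hz, transfer_ms, processing_ms):
    """Return the time left for exposure when all steps run back to back."""
    cycle_ms = 1000.0 / trigger_rate_hz  # 25 triggers per second -> 40 ms
    remaining_ms = cycle_ms - transfer_ms - processing_ms
    if remaining_ms <= 0:
        raise ValueError("transfer and processing alone exceed the cycle time")
    return remaining_ms


budget = exposure_budget_ms(trigger_rate_hz=25, transfer_ms=5, processing_ms=20)
print(budget)  # 15.0
```

If the budget comes out too short for your lighting, the options are a slower trigger rate, faster processing, or overlapping the steps.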

Notice in the timeline that processing can begin on the first frame as soon as it is received, and the second frame exposure and transfer can happen in parallel with the first frame transfer and processing. However, processing cannot begin on the second frame until processing is completed on the first frame (unless you employ multi-threaded processing on a multi-core processor).

Fig. 1: Image capture and processing timeline.
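The effect of overlapping these steps can be explored with a small simulation. This is a simplified model under stated assumptions, not a reproduction of Fig. 1: it treats exposure, transfer and processing as three stages that each handle one frame at a time, and the 15ms, 5ms and 20ms durations and the 25ms trigger period are illustrative values.

```python
def processing_finish_times(n_frames, trigger_period_ms,
                            exposure_ms, transfer_ms, processing_ms):
    """Return the time (ms) at which processing of each frame completes."""
    durations = (exposure_ms, transfer_ms, processing_ms)
    stage_free_at = [0.0] * len(durations)  # when each stage is next idle
    finish_times = []
    for frame in range(n_frames):
        # A frame cannot start exposing before its trigger arrives.
        ready_at = frame * trigger_period_ms
        for stage, duration in enumerate(durations):
            # Wait until both the frame and the stage are available.
            start = max(ready_at, stage_free_at[stage])
            ready_at = start + duration
            stage_free_at[stage] = ready_at
        finish_times.append(ready_at)
    return finish_times


times = processing_finish_times(3, 25.0, 15.0, 5.0, 20.0)
print(times)  # [40.0, 65.0, 90.0]
```

In this model each frame still takes 40ms from trigger to result, but a new result arrives every 25ms, because the longest single stage (20ms of processing) is shorter than the trigger period.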

For line scan cameras, speeds are specified in lines per second rather than frames per second. To calculate the minimum speed required of the line scan camera, we need to know two things: 1) resolution (which we discussed in the previous section) in the direction of motion; and 2) the speed of the object being imaged. If we need a pixel resolution of 3/1,000˝ and our object is moving at 100˝ per second, we will require a line-scan camera speed of 33,333 lines per second, or 33.3 kHz line rate (100˝ / 0.003˝ = 33,333).
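The line rate calculation is a single division, sketched here with the numbers from the example. The function name `line_rate_hz` is illustrative, and both arguments are assumed to use the same length unit.

```python
def line_rate_hz(object_speed_per_s, pixel_size):
    """Return the lines per second needed to capture one line per pixel of travel."""
    return object_speed_per_s / pixel_size


rate = line_rate_hz(object_speed_per_s=100.0, pixel_size=0.003)
print(f"{rate:,.0f} lines/s ({rate / 1000:.1f} kHz)")  # 33,333 lines/s (33.3 kHz)
```

As with frame rate, it is worth choosing a camera with some margin above this figure.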

Interconnect

There are multiple interconnect standards that connect cameras to host computers. Some require frame grabbers, such as RS-170, NTSC/PAL, CoaXPress, Camera Link and Camera Link High-Speed (CLHS). Others rely on interfaces that are typically built into computers and are ubiquitous, such as Gigabit Ethernet (GigE), FireWire (IEEE 1394) and USB variants.

For camera manufacturers to choose the right interconnect, we need to know the bandwidth requirement (which is the product of resolution, frame rate and the number of bits per pixel) and the distance between the camera and host computer. Since analog-based interfaces are going away in favor of digital interconnections, we can say that GigE offers the greatest transmission distance, while CLHS offers the highest throughput.
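A rough bandwidth estimate follows directly from resolution and frame rate. In this sketch the 8 bits per pixel is an assumption (the text does not specify a bit depth), the function name is illustrative, and the 125 MB/s figure is simply the nominal 1 Gbit/s line rate of Gigabit Ethernet; the usable payload rate is lower once protocol overhead is taken into account.

```python
def data_rate_mbytes_per_s(width_px, height_px, frames_per_s, bits_per_pixel=8):
    """Return the raw image data rate in megabytes per second."""
    bits_per_s = width_px * height_px * frames_per_s * bits_per_pixel
    return bits_per_s / 8 / 1e6


GIGE_NOMINAL_MBYTES_PER_S = 125.0  # 1 Gbit/s line rate, before protocol overhead

rate = data_rate_mbytes_per_s(1200, 600, 30)
print(f"{rate:.1f} MB/s")  # 21.6 MB/s
print(rate < GIGE_NOMINAL_MBYTES_PER_S)  # True
```

The same function applied to the 4,000 x 2,000 example at 30 fps gives 240 MB/s, which is above that nominal figure, so resolution alone can change which interconnects are candidates.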

Light Wavelength

Cameras vary in their sensitivity based on wavelength of light. Knowing if a particular wavelength of light is going to be used to illuminate the object in question is of critical importance to the camera manufacturer.

With laser illumination, it is easy to find the light wavelength – that will be part of the laser’s specification. It’s a bit more difficult to know the wavelength distribution of a halogen light (more toward the red end of the spectrum) or a fluorescent light (more toward the blue end of the spectrum). You can generally get the component wavelengths from the lighting manufacturer.

Quantity

Sometimes an application will require a bit of functionality that is just outside that of a standard product. It may be possible to modify the camera to make it perfect for a given application. Camera manufacturers who make a high volume of cameras may want to know what the volume potential is for a modified camera.

If the application is “one-of-a-kind” and only requires one camera, this may limit a manufacturer’s ability to produce a custom modification. On the other hand, an application that, if successful, will require a high volume of cameras on an ongoing annual basis may be a different story.

Price

This requirement would have been listed first if these items were ranked by importance. It’s not the only concern, however, since a vision system that can’t do the job isn’t worth anything. The reason price enters into the discussion is that some of the above items are nuanced. For example, on the question of acquisition rate, customers often request that a camera run “as fast as possible,” when what they really mean is “as fast as possible within my budget.”

It’s important to be straightforward with your camera provider about your price range. The best providers are more interested in you being a satisfied customer than making an extra 20% by selling you more than you need. The more information you can provide, the happier you will be with your new camera and the price you paid for it.

Glen Ahearn is the sales and application support manager at Teledyne DALSA. For more vision system how-to articles, visit the company’s blog at www.possibility.teledynedalsa.com