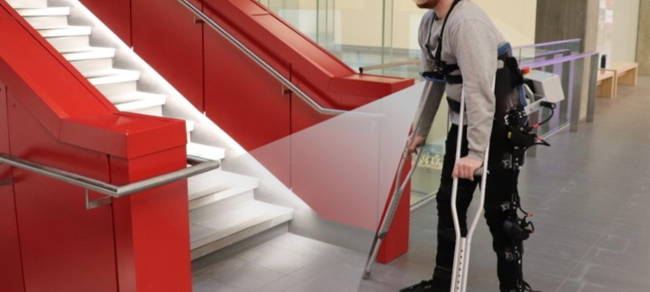

Self-walking robotic exoskeleton combines AI and wearable cameras

By DE Staff

U of Waterloo robotic legs capable of thinking and making control decisions on their own.

(Photo credit: University of Waterloo)

While motorized exoskeletons have been developed before, they are typically controlled manually with joysticks or smartphone applications.

“That can be inconvenient and cognitively demanding,” said Brokoslaw Laschowski, a PhD candidate in systems design engineering and ExoNet research lead. “Every time you want to perform a new locomotor activity, you have to stop, take out your smartphone and select the desired mode.”

ExoNet improves on this basic design by fitting the exoskeleton with wearable cameras and optimized AI software that processes the video feed to accurately recognize stairs, doors and other features.

The next phase of the ExoNet research project will involve sending instructions to motors so that robotic exoskeletons can climb stairs, avoid obstacles or take other appropriate actions based on analysis of the user’s current movement and the upcoming terrain. The researchers are also working to improve the energy efficiency of the exoskeletons’ motors by using human motion to self-charge the batteries.

“Our control approach wouldn’t necessarily require human thought,” Laschowski said. “Similar to autonomous cars that drive themselves, we’re designing autonomous exoskeletons that walk for themselves.”

The latest in a series of papers on the related projects, “Simulation of Stand-to-Sit Biomechanics for Robotic Exoskeletons and Prostheses with Energy Regeneration,” appears in the journal IEEE Transactions on Medical Robotics and Bionics.

Waterloo.ai